System Architecture

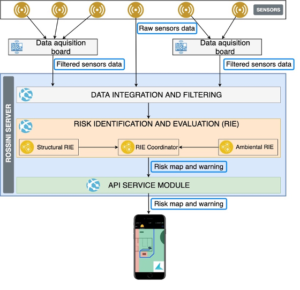

The diagram shows the system architecture from the perspective of the navigation system implementation. The main components are:

- A mobile client running the application to guide the worker during emergencies;

- The ROSSINI server, which acquires sensor data and uses it to compute the combined risk-map (i.e., a data structure representing the risk of transiting through each area of the plant); the risk-map is then transmitted to the client;

- A set of sensors that communicate with the ROSSINI server either directly or through a data acquisition board.

The ROSSINI server includes various modules, in particular:

- Data integration & filtering module: receives raw data from the sensors, then integrates and filters it before passing it to the RIE module.

- RIE: this module integrates the structural and environmental RIEs, combining their risks to create the risk-map.

- API service module: provides the risk-map to the mobile client and raises a warning when a potentially dangerous situation occurs.

Mobile app

Two main problems emerged in the analysis of the navigation app: 1) how to reliably compute the precise user location (which includes position and orientation); and 2) how to interact with the user to effectively guide them along the safest route. After considering the state of the art, two solutions addressing these problems were devised:

- Positioning: a hybrid solution based on a combination of indoor and outdoor positioning techniques is used. While the outdoor solution uses the operating system APIs (which combine GNSS, Wi-Fi and cellular positioning), the indoor positioning technique is an ad-hoc solution based on visual markers and visual-inertial navigation. This solution has the advantage of not requiring external radio signals (which might be unavailable in emergency situations) and makes it possible to compute the user’s orientation in addition to their location. It also relies on augmented reality, which is implemented in stable and well-maintained libraries.

- Navigation instructions: a solution based on both allocentric and egocentric maps was designed. When the user’s location is known with high precision, the system shows navigation information using an egocentric map, also using augmented reality to better guide the user. When the user’s location is not known with high precision, the system shows the map with an allocentric approach. In both cases, a multi-modal approach is adopted, combining visual information with audio and haptic information. In particular, the app adopts sonification techniques derived from the literature on assistive technologies for people with visual impairments.

The risk-aware route is computed on the client from two data structures: the risk-map (see above) and the routes-graph. The latter is a directed graph that represents all the walkable paths; it discretizes the area into cells and accounts for physical characteristics of the environment, such as walls and emergency doors (which can be traversed in one direction only). Starting from a floor plan of the area, an external (i.e., non-mobile) app discretizes the space into cells and creates a node for each cell, as well as the connections between nodes (e.g., two adjacent nodes are connected if there is no wall between them). The diagram below shows an example: black pixels represent walls, while the green arrows start from the centre of a cell and indicate which adjacent cells are connected. Red segments represent emergency doors that can be traversed in one direction only, while grey segments represent doors that can be traversed in both directions. The graph is then serialized to a file and transferred to the mobile device, where it is loaded when the app runs.
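The construction of the routes-graph can be illustrated with a minimal sketch. It assumes a rectangular grid of cells, walls given as blocked pairs of adjacent cells, and one-way doors given as (source, destination) pairs; the function name `build_routes_graph` and this input encoding are hypothetical and are not taken from the actual external app, which works on an image of the floor plan.

```python
def build_routes_graph(rows, cols, walls, one_way_doors):
    """Return a directed adjacency map {cell: set of cells reachable in one step}.

    walls: set of frozenset({cell_a, cell_b}) pairs with a wall between them (assumed encoding).
    one_way_doors: set of (src, dst) pairs traversable only from src to dst (assumed encoding).
    """
    # One node per cell of the discretized area.
    graph = {(r, c): set() for r in range(rows) for c in range(cols)}

    for (r, c) in graph:
        # Looking only right and down visits each adjacent pair exactly once.
        for dr, dc in ((0, 1), (1, 0)):
            neighbor = (r + dr, c + dc)
            if neighbor not in graph:
                continue  # outside the grid
            if frozenset(((r, c), neighbor)) in walls:
                continue  # a wall separates the two cells
            graph[(r, c)].add(neighbor)
            graph[neighbor].add((r, c))

    # A one-way door keeps the forward edge and drops the reverse one.
    for src, dst in one_way_doors:
        graph[dst].discard(src)

    return graph
```

In this sketch, a door that can be traversed in both directions needs no special handling, since it behaves like an opening between two adjacent cells.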

While the mobile app is running, it receives each new risk-map as soon as it is available on the server. Once a risk-map is received, the mobile app updates the weights of the nodes in the routes-graph (e.g., if an area in the risk-map has a high risk, the nodes in the routes-graph contained in that area are assigned a high weight). Then, using an adaptation of the A* algorithm, the best route is computed from the current user position to each safe area, and finally the best route among them is selected. By “best route” we mean the route that minimizes the maximum weight along it, which differs from the typical use of A*, where the aim is to minimize the sum of the weights along the route.
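The difference between minimizing the maximum weight and minimizing the sum can be made concrete with a small sketch. It is not the app's actual A* adaptation: for brevity it uses a Dijkstra-style best-first search with no heuristic, in which the cost of a partial route is the largest node weight seen so far. The names `update_weights`, `minimax_route`, `best_route` and the `area_of` lookup are hypothetical.

```python
import heapq


def update_weights(nodes, risk_map, area_of):
    """Give each node the risk of the area containing it (0.0 if the area is not in the risk-map)."""
    return {node: risk_map.get(area_of(node), 0.0) for node in nodes}


def minimax_route(graph, weight, start, goal):
    """Return (max_weight, path) for the route whose largest node weight is smallest, or None."""
    best = {start: weight[start]}
    parent = {start: None}
    heap = [(weight[start], start)]
    while heap:
        cost, node = heapq.heappop(heap)
        if cost > best[node]:
            continue  # stale heap entry
        if node == goal:
            path = []
            while node is not None:
                path.append(node)
                node = parent[node]
            return cost, path[::-1]
        for nxt in graph[node]:
            # Route cost is the maximum weight along it, not the sum.
            new_cost = max(cost, weight[nxt])
            if new_cost < best.get(nxt, float("inf")):
                best[nxt] = new_cost
                parent[nxt] = node
                heapq.heappush(heap, (new_cost, nxt))
    return None  # goal not reachable


def best_route(graph, weight, start, safe_areas):
    """Compute a route to each safe area and keep the one with the lowest maximum weight."""
    routes = [minimax_route(graph, weight, start, goal) for goal in safe_areas]
    routes = [route for route in routes if route is not None]
    return min(routes, key=lambda route: route[0], default=None)
```

For instance, if a two-step route crosses one cell of weight 0.6 while a four-step detour crosses three cells of weight 0.3 each, a sum-minimizing search prefers the short route (0.6 against 0.9), whereas this criterion prefers the detour (maximum 0.3 against 0.6).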